H5P is a collection of HTML5 widgets for adding interactivity to educational websites, and it ships with an interface for configuring those same widgets. Thus, it’s a web-based tool for building web-based tools. The code itself is open source. But unless you’re able to stand such things up by yourself, putting it to use has required signing up for an account at H5P.org (home of the free version) or H5P.com (home of the monetized version).

Fair enough. This is a common business model for open source software. There are, however, a few problems. First, H5P.com licenses are, to my mind, fairly expensive. Second, the H5P.org site currently offers only three of the nearly sixty widgets and is so slow and buggy as to be almost useless.

Enter Lumi.education, a tiny company out of Germany that decided the way around these issues would be to create a desktop editor for H5P (Lumi H5P Desktop Editor) that’s capable of exporting to (currently) three formats: 1) SCORM package, 2) All-in-one HTML file, and 3) One HTML file and several media files. The app is Electron-based, which means the small Lumi team has been able to provide versions for Windows, Mac, and Linux.

Test Run

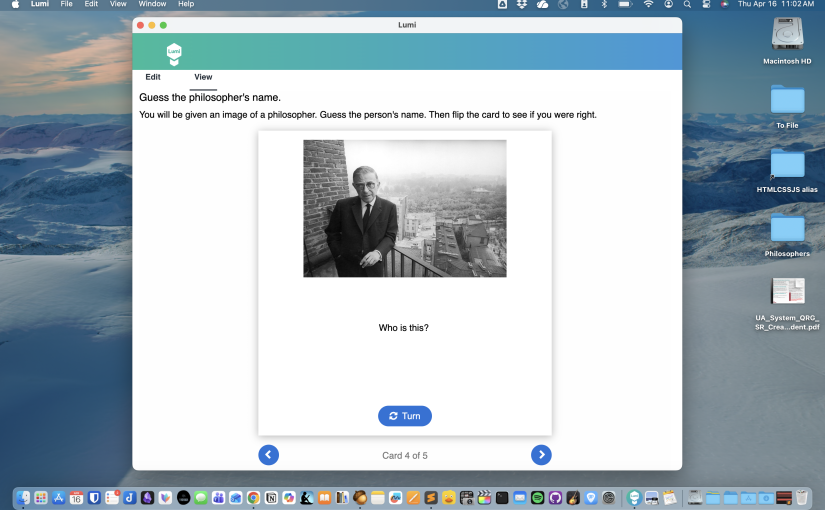

I tested the Mac Silicon version of the editor on my M1 MacBook Pro. Here’s a sample “Dialog Cards” interaction I built as a test case, based on some of my favorite philosophers. It’s an example of the All-in-one HTML file export option, and it works pretty well. The export dialog gives you some options for constraining size, which likely would have helped, but I took the defaults.

I tried to stand it up in WordPress using a custom HTML widget, but that failed. So I just created a folder for it on my server and uploaded it directly. At work, I tested it using the HTML widget in Blackboard Ultra. It worked great in that context.

I tried the multi-file export as well. That worked fine. Taking the defaults, it generated a file named “Untitled.html” along with a folder named “Untitled”, which contained a subfolder named “images” holding the images I used for the flip cards, each with a randomized name (e.g., “image-RRw65Ri1.jpg”). Whatever name you specify at export becomes the name of the index file and the support folder. I tested it locally and it worked well.
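Because the index file and the support folder have to travel together, it can be worth checking that both are present before uploading. Here is a minimal Python sketch of that check. It assumes only the layout described above (an HTML file plus a folder of the same name next to it); the function name `check_multifile_export` is made up for illustration and is not part of Lumi.

```python
from __future__ import annotations

from pathlib import Path


def check_multifile_export(html_file: str) -> list[str]:
    """Return a list of problems with a multi-file export (empty if none found)."""
    html_path = Path(html_file)
    # Assumption from the layout described above:
    # "Untitled.html" sits next to a folder named "Untitled".
    support_dir = html_path.with_suffix("")

    problems = []
    if not html_path.is_file():
        problems.append(f"missing index file: {html_path.name}")
    if not support_dir.is_dir():
        problems.append(f"missing support folder: {support_dir.name}")
    elif not any(p.is_file() for p in support_dir.rglob("*")):
        # The folder exists but holds no media files at any depth.
        problems.append(f"support folder is empty: {support_dir.name}")
    return problems
```

Calling `check_multifile_export("Untitled.html")` returns an empty list when both pieces are in place. It only checks that the files exist, not that the HTML actually references them.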

So far, I’ve only tested the SCORM output in Blackboard Ultra. It generates an error when I import it. Specifically, it says “Some issues were found with this course which may affect playability.” The upload completes and the SCORM package works exactly like the all-in-one HTML version does, but it never reports a SCORM completion status. So, there are obviously some kinks still to be worked out, at least with the SCORM export option.
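One thing that can be checked outside the LMS is the package itself. A SCORM package is a zip archive with an `imsmanifest.xml` file at its root, and that manifest normally declares which SCORM version it targets in a `schemaversion` element. The Python sketch below reads that value. The function name is hypothetical, and this is only a first sanity check: it will not explain the import warning or the missing completion status.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET
import zipfile


def scorm_schema_version(package_path: str) -> str | None:
    """Return the manifest's schemaversion text, "" if absent, None if no manifest."""
    with zipfile.ZipFile(package_path) as zf:
        # The manifest must sit at the top level of the archive.
        if "imsmanifest.xml" not in zf.namelist():
            return None
        root = ET.fromstring(zf.read("imsmanifest.xml"))

    for element in root.iter():
        # Tags may carry an XML namespace prefix like "{uri}schemaversion",
        # so compare only the local part of the tag name.
        if element.tag.rsplit("}", 1)[-1] == "schemaversion":
            return (element.text or "").strip()
    return ""
```

Knowing whether the export declares SCORM 1.2 or a SCORM 2004 edition is useful context when reporting the issue, since LMS support differs between them.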

Impressions & Issues

I have a lot of enthusiasm for this app. I think that H5P is a good tool with a good suite of interactive widgets that are useful in online education. But it’s underused because H5P.com licenses are expensive and most instructional designers lack the time and technical savvy to grab it from GitHub and set it up on their own server. H5P.org, the official home of the project, is no help in this regard, as it exists only to whet the appetite of potential users and funnel them toward H5P.com, the commercial solution.

The current version of Lumi is perfectly workable for designers who don’t need SCORM exports.

But the app is still rough around the edges. First off, in this age of autosave, Lumi’s editor has no autosave, gives no warning about unsaved work when you exit, and shows no indication that you might want to save. I learned this the hard way. Secondly, it can take a long time to launch or even to switch from edit mode to preview mode. As with the save issue, there’s no indication that it’s loading. You just double-click the icon and it eventually opens. There’s no progress bar. You might be tempted to quit and relaunch it. Resist that temptation.

However, once it’s up and running, it’s quite pleasant to work with. I like it better than the web-based versions provided by H5P. But if you prefer a web-based version, Lumi has one of those as well (Lumi Cloud), available for free or as a paid option if you’d like to support the project. I uploaded my PhilosophersFlipCards.h5p file (the save format for the Lumi H5P Desktop Editor) to Lumi Cloud and deployed it on their server. It was simple to get it up and running.
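A `.h5p` file is an ordinary zip archive with an `h5p.json` metadata file at its root, so it is easy to peek inside one before uploading it anywhere. The following Python sketch reads the title and main content type from that metadata. The function name `describe_h5p` is invented for this example, and the sketch assumes the standard `title` and `mainLibrary` keys are present.

```python
import json
import zipfile


def describe_h5p(path: str) -> dict:
    """Summarize an .h5p package: its title, main library, and file count."""
    with zipfile.ZipFile(path) as zf:
        # h5p.json holds the package-level metadata.
        meta = json.loads(zf.read("h5p.json"))
        return {
            "title": meta.get("title"),
            "mainLibrary": meta.get("mainLibrary"),
            "files": len(zf.namelist()),
        }
```

Running `describe_h5p("PhilosophersFlipCards.h5p")` on a saved file would show which content type the package is built on, which is handy when a host rejects an upload and you need to confirm what it contains.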

I’m going to keep an eye on this project and reach out to the developers about these issues. I see a lot of potential in it.

If you’re interested in H5P, see my next article in the series, where I test the H5P WordPress Plugin.